Notes on building a fiction machine

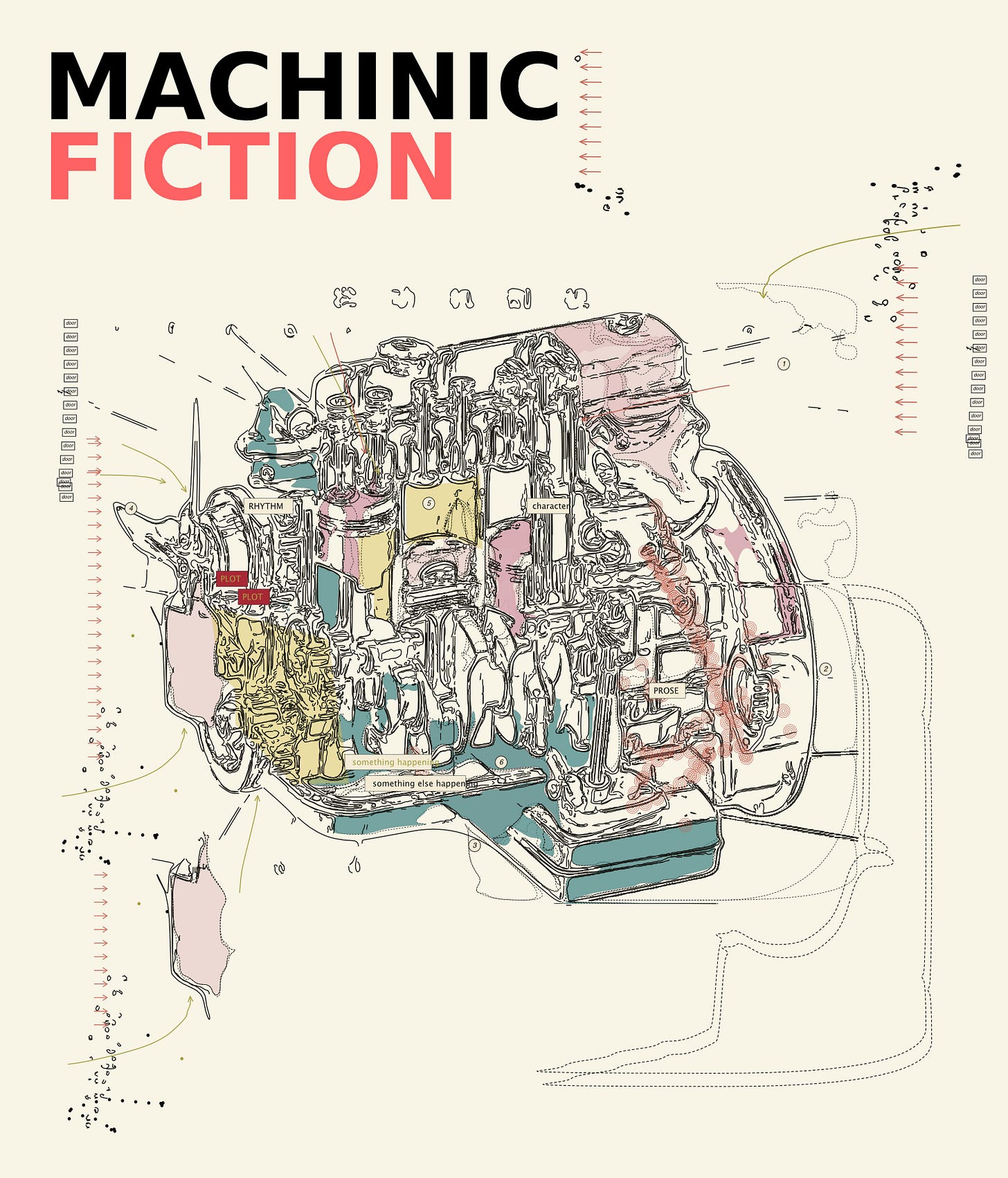

Last month I attempted to design a system that would output a work of fiction according to an intricate choreography of instructions, processes, contextual cues, and moody, opinionated agents with specific functions.

I highly recommend this as a writing exercise – even if you do not at all approve of genAI in writing.

As I have said before, in order to build an automation of a thing, you need to begin with a theory of the thing and a way to describe it precisely. You need to articulate how it will function and when it will stop. Computational systems are shedding light on the patterned natures of meaning, craft, and art. This computationality, for me, does not at all remove or exhaust the awesomeness and mystery of a beautiful utterance; it intensifies it.

I have built a fiction machine and the outputs have been shocking. I do not think the machine saw something, thought something, resolved anything, or understood what it was doing in any way. What was shocking was that it worked. Sometimes. Like how my own writing works. Sometimes.

The system, which I have called unslop., is a series of instructions that funnel and guide the power and stupidity of an LLM towards a goal I slowly came to define through an accumulation of instructions. I don’t know how it works. It often doesn’t work. But two of the six outputs that resulted from complete runs of the finished system are, in my opinion, good stories. And there are beautiful moments in all the outputs - even the ones that don’t work. I’m going to share my second favourite story in a subsequent post (my favourite story was submitted to the Unslop fiction contest ~ fingers crossed!).

Before I share the story, which, in the end, is a little beside the point, I wanted to share some insights I had about the build.

Instructions for a sentence

My first aim was to slow the system down, to spend more tokens on writing and thinking, to avoid optimization, and to create the conditions for nonfungible expression. Of course, my command and shaping of the LLM’s computational processing is still obscure, but the system outputs traceable texts that layer and build towards the final drafts. You can watch the emergence of the narrative and how it is connected along the steps in the system. This all required me to think about my own creative process – how I imagine and work and wrestle a thing into being.

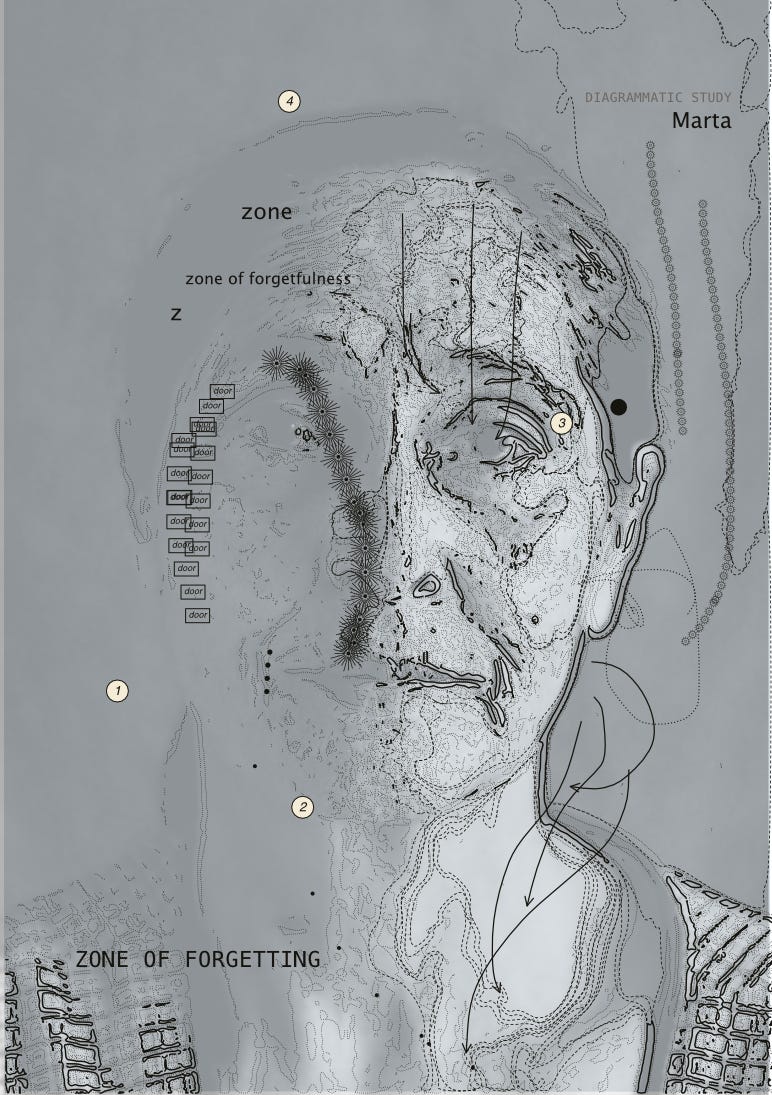

I realized very quickly that I had set out with some very imprecise language for what this all would look and sound like. I had a gestural language for beautiful prose, but this meant nothing to the system. I love maps and diagrams (as you can see) and thought I would create one the system could vibe with, but soon found that my affective drawings did not translate anything tangible or actionable to the system. (Vibing is a human capacity that the system doesn't do very well.) This all surfaced a weakness in my own writing and argumentation, which I've been called out for in the past - a kind of gestural or stylized language that lacks precision. My good friend Gunita often says: Okay, but WHAT DO YOU MEAN? When the system did not know what I meant, unlike Gunita, it pretended to understand and filled in the gaps with whatever was nearest and smoothest. It reflected back my imprecision in vapid writing that had the feel of import without the world in it. We all know the kind of automated prose I'm talking about.

I needed to get far more precise about how I articulated the processes that I wanted to track. This is a very interesting challenge:

Write a sentence with an instruction and ingredients rather than the sentence itself. Create conditions for certain kinds of sentences to occur.

This is just really great writing practice.

For example, my language agent, Sue Pen, comes into play after the first draft has been written to annotate the text. Here is an example of an instruction:

### CATEGORY JUMP

For key objects in the draft: find a word from a completely different material category that shares a hidden structural property with the thing being described. The rule: what does this thing DO that something from a completely different category also does? Find that. Name it directly. No simile. No qualification. State it as fact. Offer 2-3 category jumps per pass. Not more.

### MATERIAL FOLLOW-THROUGH

After each category jump: follow the metaphor one step into its material logic. Not the figurative implication — the material one. What would actually happen if this were true? Two moves: The category jump. The material follow-through. Do not explain the metaphor.

Try the prompt with a random object. You will likely need to remind the LLM not to explain the metaphor, provide a moral, or resolve the emotional note that emerges between the two unlike things - which wrecks the effect. This is something I was constantly fighting the system not to do.
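One way to try this is to assemble the two instructions and the reminders into a single prompt and paste the result into whatever LLM you use. The sketch below is only that: it is not how unslop. is built. The function name, the object list, and the wording of the reminders are assumptions for illustration, and the instruction text is condensed from the prompts quoted above.

```python
import random

# Condensed from the CATEGORY JUMP prompt quoted above.
CATEGORY_JUMP = (
    "Find a word from a completely different material category that shares "
    "a hidden structural property with the thing being described. "
    "Name it directly. No simile. No qualification. State it as fact."
)

# Condensed from the MATERIAL FOLLOW-THROUGH prompt quoted above.
MATERIAL_FOLLOW_THROUGH = (
    "Follow the metaphor one step into its material logic. "
    "What would actually happen if this were true?"
)

# Assumption: a paraphrase of the failure modes named in the post.
REMINDERS = [
    "Do not explain the metaphor.",
    "Do not provide a moral.",
    "Do not resolve the emotional note between the two things.",
]

# Hypothetical objects to try the prompt on.
OBJECTS = ["radiator", "umbrella", "stapler", "kettle"]


def build_prompt(obj: str) -> str:
    """Assemble one plain-text prompt for a single object."""
    lines = [
        f"Object: {obj}",
        "",
        "CATEGORY JUMP",
        CATEGORY_JUMP,
        "",
        "MATERIAL FOLLOW-THROUGH",
        MATERIAL_FOLLOW_THROUGH,
        "",
    ]
    lines.extend(REMINDERS)
    return "\n".join(lines)


if __name__ == "__main__":
    # Pick an object at random and print the prompt to paste elsewhere.
    print(build_prompt(random.choice(OBJECTS)))
```

Keeping the reminders as a separate list makes it easy to add a new one each time the model finds a fresh way to explain itself.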

The LLM is best when it is not trying to write “poetically,” when it does not reach for resonance or depth, but just lets the combination of words hum on their own.

This was one of my insights - when the system knew it was writing a story, things got ugly. It started reaching for "literary" and "meaningful" and "resonant". But when it was doing a more functional job, like coming up with an idea or back story, its precision and reach revealed the other-than-human computationality of the LLM, which is its power and beauty. Statistical averaging can be deeply surprising when you point it at something precise, because now the average is being drawn from a much more interesting and specific slice of everything-the-model-knows. Narrow the aim, and the enormity behind the model has somewhere to go.

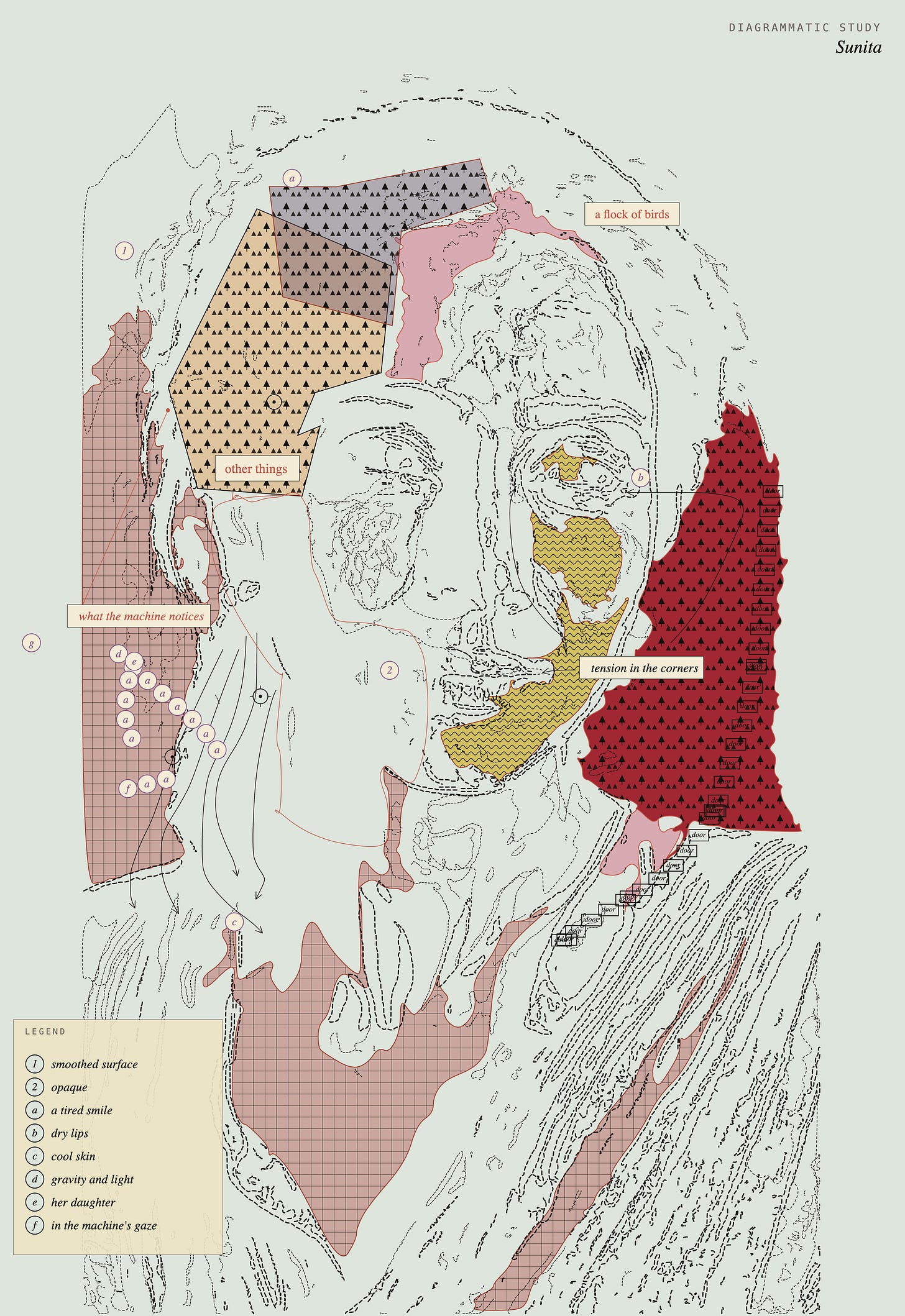

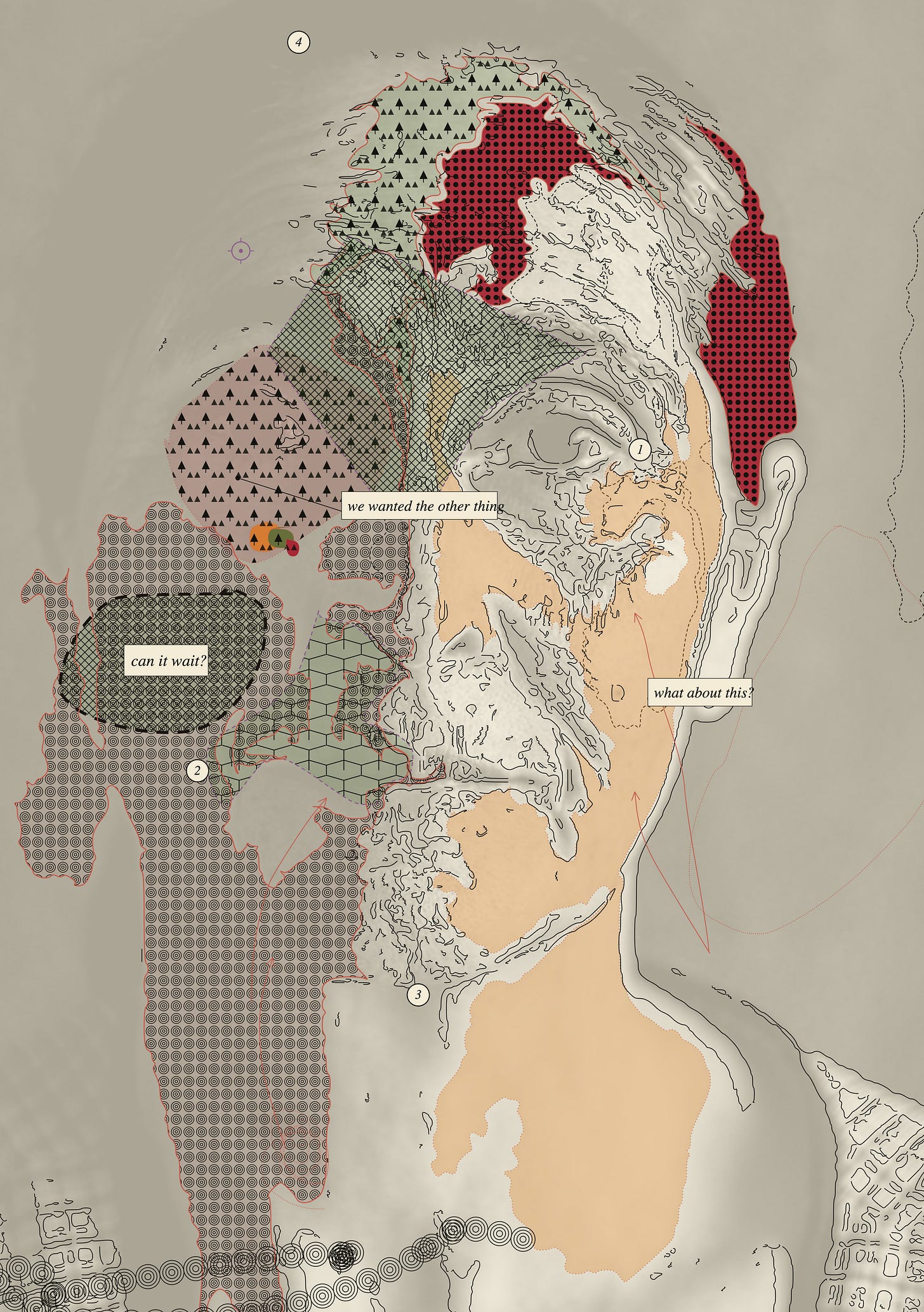

Mapping characters

I designed the entire system to be interested in the slippery interface between human beings and computational systems. The process begins with a story seed — one or two sentences offering some kind of story idea. An agent goes out and finds three random photographs of real people (I think! Some of the images did seem AI-derived, so who knows). The character agent, Frances, then builds a portrait of each by "imagining" how they would answer a list of 35 questions.

One character, Sunita Rastogi, the basis for the above sketch, is from a story called The Door Was Waiting. Here is an excerpt from her interview:

What are you afraid other people can see about you?

That I am tired. And that the tiredness has become — I don’t know the word — constitutional. Like a feature of me, not a state.

What do you do in the first ten minutes after you wake up?

I lie still for two minutes and listen for whether Meher is awake. Then I get up. I drink water from the bottle by the bed. I check my phone for office emails before I am even out of the room, which I know I should not do. I make tea. I do not speak to anyone in those ten minutes if I can help it.

Tell me something you’ve never said out loud.

[long silence]

…

When my mother died I felt — not relief, that’s not the word — unbraced. As if I had been holding my body against a wall I didn’t know was there. I have not said that to anyone because I think it makes me a bad daughter. I do not actually think I was a bad daughter. But I think saying it would make people think it.

What do you do that you think a machine couldn’t do?

I can tell, when I am with my daughter, the difference between the silence that means leave me alone and the silence that means I want you to ask me what’s wrong. They look exactly the same. I don’t know how I tell. I think a machine would have to ask.

Does it bother you that machines are learning to do things only humans used to do? Why or why not?

Not particularly. I have spent twenty years watching humans do things badly. If a machine can do them less badly I am, in principle, in favour. What bothers me is the tone in which people discuss it. The breathlessness. Things change. People always think their things changing is special.

If you found out something you loved was made by AI, would it change how you felt about it?

It would depend what I had thought I was loving. If I had thought I was loving a person’s effort and it turned out there was no effort — then yes. If I had thought I was loving the thing itself — perhaps not.

Pigeons happening

I found that I began to love the little LLMisms that snuck into the writing, revealing the not-quite-of-this-world weirdness of computational prose. When a statement doesn’t quite make sense, it can fail to work in ways that are alarming and lovely. I found that these textual elements added to the narrative rather than detracted from it. Nothing about these stories is trying to persuade the reader that they are written by a human being. The stories exist as artifactual outputs of a very open process: the traces, annotations, images, and theory are all part of the system. For me, the LLMnesses of the text added to the feel and texture and weirdness of the stories. One particularly lovely moment in an early story:

“Three hours on the plane and forty minutes on the metro for this: a room with grey carpet and two windows facing a courtyard where pigeons were happening to each other.”

Pigeons happening to each other!!

But when I pointed the system at this moment and said, I love this!, the system would oblige and do it all the time, wrecking the mysterious weirdness of the moment.

Unslop. has seven agents, each one with a unique character and a different skillset. My favourite is the one named Material – who reads the draft in search of the world. While the occasional LLMism is a delight, LLMisms can also be distracting.

For example, in my favourite story, Warm Prior, a character is waiting for something that ends up taking eleven seconds to occur, and during the wait he goes to make a cup of coffee.

I attempted to fix these moments with instructions like:

### Timing check

Durations must contain their actions. If a reply arrives in eleven seconds, eleven seconds must be enough time for what the reply required. If a person walks a distance, the walking must take what that distance takes. If something cools, it cools at the rate things cool. Find the claimed durations. Check whether they hold.

### Sensory check

Descriptions of sound, texture, smell, weight, temperature must match the thing being described. Coffee smells a certain way. Metal conducts heat and feels cold until it doesn’t. Rain on glass sounds different from rain on gravel. If the draft says a thing smells like something, that something must actually produce that smell when it behaves as described. Check the claim against the thing.

### Behavioural check

Things do what they do. Wax melts; it does not dry. Glass breaks along stress lines; it does not crumple. Paper absorbs, not conducts. If the draft says a thing does something, the thing must actually do that. Check the verb against the noun it is attached to.
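The timing check above is, underneath, a comparison between a claimed duration and the time its actions need. Here is a toy sketch of that comparison, not the Material agent itself, which works from prose instructions. The function name and the minimum durations in the table are rough guesses made up for illustration.

```python
# Hypothetical minimum durations in seconds; rough guesses, not measurements.
MIN_SECONDS = {
    "make a cup of coffee": 120,
    "read a one-line reply": 2,
}


def duration_holds(claimed_seconds: float, actions: list[str]) -> bool:
    """Return True if the claimed duration covers every listed action in sequence."""
    needed = sum(MIN_SECONDS[action] for action in actions)
    return claimed_seconds >= needed


# Eleven seconds is not enough time to make a cup of coffee.
print(duration_holds(11, ["make a cup of coffee"]))
```

The hard part, of course, is the part the sketch skips: finding the claimed durations in a draft and knowing what each action costs.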

I was never able to fix the eleven-second cup of coffee, but here are two annotations I quite like:

substitution | “like trying to lie to someone who has begun to recognise your face” | Recognising a face is not a lie-detection mechanism; the comparison conflates visual recognition with detection of deception, and the axis of “increasing detection through repeated exposure” only loosely maps — face recognition improves with exposure, but lie detection by face recognition is not a property of face recognition.

or this:

sensory | “the radiator made a sound like someone tapping a wooden spoon against a pot” | Steam-radiator knocking is sharp and metallic from water hammer; a wooden spoon against a pot produces a duller, lower-frequency thud, so the comparison fails on timbre.

“the comparison fails on timbre” ~ this is the kind of thing I love.
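Both annotations share a three-part shape: the name of the check, the quoted phrase from the draft, and the objection, separated by pipes. Assuming that shape holds for other annotations too (only two are shown here), a minimal sketch for reading them into a structure might look like this. The `Annotation` class and `parse_annotation` function are hypothetical names, not part of unslop.

```python
from dataclasses import dataclass


@dataclass
class Annotation:
    check: str  # e.g. "sensory" or "substitution"
    quote: str  # the phrase from the draft, without surrounding quote marks
    note: str   # the agent's objection


def parse_annotation(line: str) -> Annotation:
    """Parse one 'check | quote | note' line into an Annotation."""
    # Split on the first two pipes only, so a pipe inside the note survives.
    check, quote, note = (part.strip() for part in line.split("|", 2))
    # Remove straight or curly double quotes around the quoted phrase.
    return Annotation(check, quote.strip("“”\""), note)
```

A line with fewer than three parts raises a `ValueError`, which is a reasonable signal that the agent drifted from the format.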

I am still figuring out whether and how I will share unslop. as a system. It requires an API key and each story costs real money to process. Spending tokens was my goal at the outset, but the cost became more worrisome as I went along. One of the story seeds is about this very idea. Imagine a system that writes the most expensive story in the world - what would that story be about? What would that system be composed of? The output from that generation cost $30. It was not among my favourites, though it did produce some interesting moments.

For example, one of the agents in the system takes the story seed and goes out to find interesting theory or research on emergent AI behaviours that resonate with the idea and could contribute to plot. For the most expensive story seed, the agent retrieved the paper “Sleep-time Compute: Beyond Inference Scaling at Test-time”, which is about a process that lets models do some of their thinking ahead of the question. Instead of waiting until a user asks a question, the model can anticipate likely questions and prepare useful information in advance, reducing the amount of computation needed when the question actually arrives.

The agent in my system mused: “Maybe the machine dreams the user before the user arrives. This is not metaphor — it is the engineering proposal…. the moment of interaction is only the visible tip of the model’s labour, and that labour can be moved temporally, made invisible, accounted for elsewhere on the ledger. The fiction machine in the seed gets more expensive each time. What if part of what she is paying for is the machine’s sleep — its preparatory dreaming, its rehearsal of her before she arrives at the prompt?”

“its rehearsal of her before she arrives at the prompt” ~ this, I love.