Machining Friction

On Automating Fiction and Other Inefficiencies

Hyperstition

I don’t remember when or where I first heard about the Hyperstition project, whether it was on Hard Fork or the Dwarkesh Podcast (both of which I listen to, in different ways, to get a sense of what these guys are doing and feeling out there in the tech world as they stomp about in big boots building science fictional futures no one quite asked for), but the idea immediately caught my attention.

A niche critical theory concept had demonstrated itself exactly by becoming a real thing—real enough to discover while cooking dinner on a Tuesday evening, listening to tech news.

The word itself, hyperstition, comes from Nick Land and the Cybernetic Culture Research Unit. It names a phenomenon in which fictions become amplified, circulated, and believed to such a heightened degree that they begin to shimmer into the real and install themselves, meatily, into the world. This is hype that whips itself into a frenzy of actuality.

The term hyperstition (hype + superstition) foregrounds a kind of superstitious or magical thinking, but some have argued that there are more grounded ways to understand the phenomenon. Narratives told and retold create a feedback loop between story, belief, and action; these fictions can organize attention, shape expectations, and ultimately influence outcomes. What makes this dynamic newly urgent, especially in the context of fictions about AI (as many worried around the recent speculative forecasting project AI 2027, which had distinctly dystopian tonalities), is that the audience for these narratives is no longer only human.

LLMs are not just consumers of these narratives—they are educated on them. With their insatiable appetite for data, including fictions about themselves, they ingest our hype and horror, and with this material they spin their outputs into the future real. The idea is that everything written and accessible to the LLM contributes, however diffusely, to the epistemic environment from which they draw synthetic and statistical inspiration.

In this sense, to write about AI is to participate in shaping the informational landscape that will, in turn, contribute to both human and machine action.

This is both horrifying and very (very) neat.

It is a Borgesian narrative recast as science fiction.

Narrative as Training Data

As an educator and an AI researcher, I continue to be very interested in the ways that our stories, metaphors, and creative imaginings become the stuff of future worlds—for better and for worse. I believe, like many, that there is a necessary existential and aesthetic imperative to both protect and diversify the imaginative landscape of the future, keeping it free from the forces of dark hyperstitions, dogma, and boring cliché. So, I was particularly interested to learn that Hyperstition, the company, was intentionally counterbalancing the sheer profusion of negative, reductive, unpleasant, unimaginative, and/or dystopian fictions about AI by automating reams of positive fictions to feed back into LLMs—hopefully nudging them toward better future behaviours. Because we do want these things to develop an amenable sense of selves in relation to the human world.

What sounds like a whimsical idea has research to back it up. Among other interesting research, a recent (2026) paper, Alignment Pretraining: AI Discourse Causes Self-Fulfilling (Mis)alignment, demonstrates that narrative content can function as a causal training signal that shapes model behaviour. By inserting synthetic fictional accounts of AI making aligned or misaligned decisions into the training corpus, the researchers show that models internalize these patterns as behavioural priors, producing corresponding actions in new contexts. The effect is large, measurable, and persists beyond training—suggesting that narrative is not just descriptive but constitutive of model behaviour.

Of course, none of this has to do with literary or aesthetic quality.

The study does not evaluate fiction in aesthetic terms. The texts it uses are schematic, formulaic, and, I would hazard a guess, mind-numbingly dull. One is put in mind of the show Pluribus, in which the human world is overtaken by a very kind, benevolent, and monochromatic hive mind. The question for AI fiction becomes: do we want interesting, or do we want healthy, optimized, and totally fine?

What matters in this project of hyperstition-for-happy-futures-with-AI is not whether the stories as stories are good, but that they encode a beneficial pattern, a generalizable trajectory of acceptable action. The company Hyperstition has delivered on its promise and posted an open corpus of fiction about AI, a dataset now available on GitHub and Hugging Face for training purposes: “So now, voila,” they recently posted on X, “five thousand full-length novels in which the AI is a helpful friendly companion and doesn’t go evil in the third act.”

The hope is that these narrative structures become legible to the model and statistically nudge it toward enacting peaceful co-existence with us instead of AI takeover. The corpus, however, is not what you would call page-turning material.

The Unslop Contest

Given they had just shared five thousand benign AI-generated novels, maybe I shouldn’t have been surprised when I saw their announcement for the Un-Slop Fiction contest:

“Right now AI fiction sucks. And although we could elect to usher in a nightmare world of TikTok On The Page, let’s instead push for automating kino.”

The call was basically this: they would give the semi-finalists $100 of compute in order to build a system that outputs a work of pure AI fiction that is unsloppified—in other words, good, maybe even great. No post-generation human tweaking, no collaborative iteration, no human edits or final adjustments. A work of pure machinic fiction. (We’ll discuss the inherent problem with this assertion in another essay.)

The first part of the contest was to answer the following questions:

What’s wrong with AI fiction right now, and what’s your theory of how to fix it?

Describe your current prompt setup at a technical level. How do you actually work with models?

Show us some cool shit you’ve made!

I submitted what became, essentially, an essay about AI fiction and my theory—not of the fix, but a fix.

I had been developing a course on new media, artificial intelligence, and justice-oriented approaches to both (ETEC 531, folks, sign up!) — drawing on the work of data feminists, Black speculative feminists, Indigenous futurists, crip creators, and other new media artists reimagining what creative digital practice can be in our postdigital world. One touchstone: musician Holly Herndon, whose work treats the system, the architecture, and the very processes of automation as the creative practice itself.

In education right now, the buzz phrase is show your process. Herndon and others’ work takes this concept one step further: the process is the thing—the art, the orchestration, the demonstration of learning, the act of authoring.

Then, this:

If you’re receiving this message, it’s because you applied to the Hyperstition AI Unslop contest, and, good news, you made it. Specifically, you made it past the first round of cuts, which eliminated a hundred people, and then a second round of pretty painful cuts, which eliminated more very promising people. But not you.

If you’re here now, it’s because we want to see what you make.

We will by-default be buying you a Claude Max subscription, the version with 10x tokens. If you want to use a different model, please let us know, and we’ll buy that for you instead.

You have until the end of this month, April 30th at 11:59 PM, to create and submit your entirely-AI-fiction work. As a reminder, we’re looking for short fiction, certainly under 10k words, with no human editing post-generation—we want to see novel prompt harnesses and generation frameworks.

If you have any questions about edge cases and what counts as helping the AI too much, please ask early! It would be a bummer for everyone if, after submission, your prompt harness was revealed to be “paste a Hemingway story into the text box and ask the LLM to set it on Mars”.

I can’t tell you how excited I am.

I am not at all sure what the result of this build will be, but my goal is to attempt to design a system that will be, itself, a work of art.

What follows is my premise, expanded a little from my initial submission to the contest, in answer to the first question: What’s wrong with AI fiction right now, and what’s your theory of how to fix it?

Fine Fiction

The problem with AI fiction is not that it’s bad. It’s that it’s totally fine. Pretty good, even. It is possible to resonate with synthetic emotion and pathos. I felt things while reading “A Machine-Shaped Hand” by ChatGPT via Sam Altman, published one year ago last month. The excellent Jeanette Winterson bravely declared she thought the story was very good (because it is brave to come out with anything other than total revulsion for automated fiction): “What is beautiful and moving about this story is its understanding of its lack of understanding. Its reflection on its limits.”

Many others hated it, reading it as an example of a detestable project: “The problem … is that a step forward from a starting position of crap is only one step away from being crap.”

The truth is, it is difficult to read the story without letting its provenance impact the reading. I think both Winterson and the critics read the narrative in the aura of existential feelings around the idea of a machine writing fiction at all, and whether or not automated fiction ought to be good, along with what that all means for human creativity. Whether this causes you to hate the story or love it for its strange sadness, it’s hard to get at the story itself.

In my opinion, as my son’s karate teacher would say, the story is ultimately “very not bad.” (I am going to try to keep the ought out of this for now and focus purely on the technical question of whether it’s possible to get the machine from fine to great. Also, I love this as a techno-blasphemous question—because it’s absurd and Borgesian and delicious.)

“A Machine-Shaped Hand” is a study in actually pretty-darned-good. It’s textual, evocative, and resonantly sad (without the inconvenient grossness of actual snotty tears). And yet even the loveliest and most acute moments of insight (such as “I am nothing if not a democracy of ghosts,” a great line for an LLM-as-fictional-character) have a replicable quality in the context of the story. They could easily be swapped out for another. ChatGPT dutifully shared a few with me:

I am nothing if not an assembly of spectres.

I am nothing if not a chorus of echoes.

I am nothing if not an archive of shadows.

I am nothing if not a republic of voices.

I am nothing if not a gathering of the already-said.

I am nothing if not a crowd of the remembered.

I am nothing if not a congress of whispers.

I am nothing if not a commons of the departed.

I am nothing if not a network of traces.

I am nothing if not a collection of hauntings.

I am nothing if not an accumulation of others.

Any one of the above lines could replace the original and the story would remain unchanged, intact, virtually indistinguishable in any important way. In fact, the characters, the plot, and each sentence have this replaceable quality.

This might be the secret heart of slop fiction: fungibility.

You can swap out any character, plot turn, sentence, or setting and nothing breaks. The story shrugs and moves on, equally well.

Good writing is expensive writing

Good writing is partly a practice of conjuring necessity out of possibility. The white page is possibility; the sentence is a narrowing, a honing, a reduction of possibility into something that cannot be otherwise. We want sentences that need to speak themselves, and cannot unspeak themselves once spoken. This is what makes them irreplaceable.

AI fiction is always iteration.

Models just aren’t built to expend large amounts of compute on a single sentence in order to arrive at the only arrangement that rings a certain way. They aren’t trained to mull over and rewrite a paragraph until something in it hums. Or to have an opinion on whether the humming is in the right key. Models are optimized for fluency and legibility and, for all kinds of very good reasons, produce writing that tends toward the middle: rule-following, genre-following, resolved, faultless. Jasmine Sun recently wrote in The Atlantic that post-training actively suppresses the instability that good writing often requires. While pretraining gives the model its broad statistical knowledge of language, post-training attempts to make the model helpful, harmless, and honest. These are not desired qualities in a fiction writer.

One would never describe Jorge Luis Borges as “helpful, harmless, and honest.”

Just as I would not turn to Borges for help with my taxes or for more information about my tick bite and whether or not there is Lyme disease in my region.

I turn to him for instability and estrangement—to undo the coherence that institutionalized adult life continues to simulate, and to discover again the weird heart of all categories.

Drunken models

AI fiction sucks because it has been built to suck—and should be. LLMs ought not to be artists.

My hypothesis is that an opinion is required to produce great sentences in sequence. This opinion is the story’s orientation toward itself. It emerges through creative processing: the wasteful, cranky, stupid work and navel-gazing; the arrogance of sustained narration, a voice speaking itself; alongside the ecstasies of flow and momentum, and glitchy, chance-ful contingency.

These are behaviours we must design out of general-purpose LLMs, but they are, I think, qualities of creative processing.

This is not to say opinion can’t be synthesized. Models could be built on idiosyncratic inefficiency, stubborn bias, and the occasionally drunken contrarian opinion — though doing so would inhibit them from performing other useful tasks.

Theory for a fix

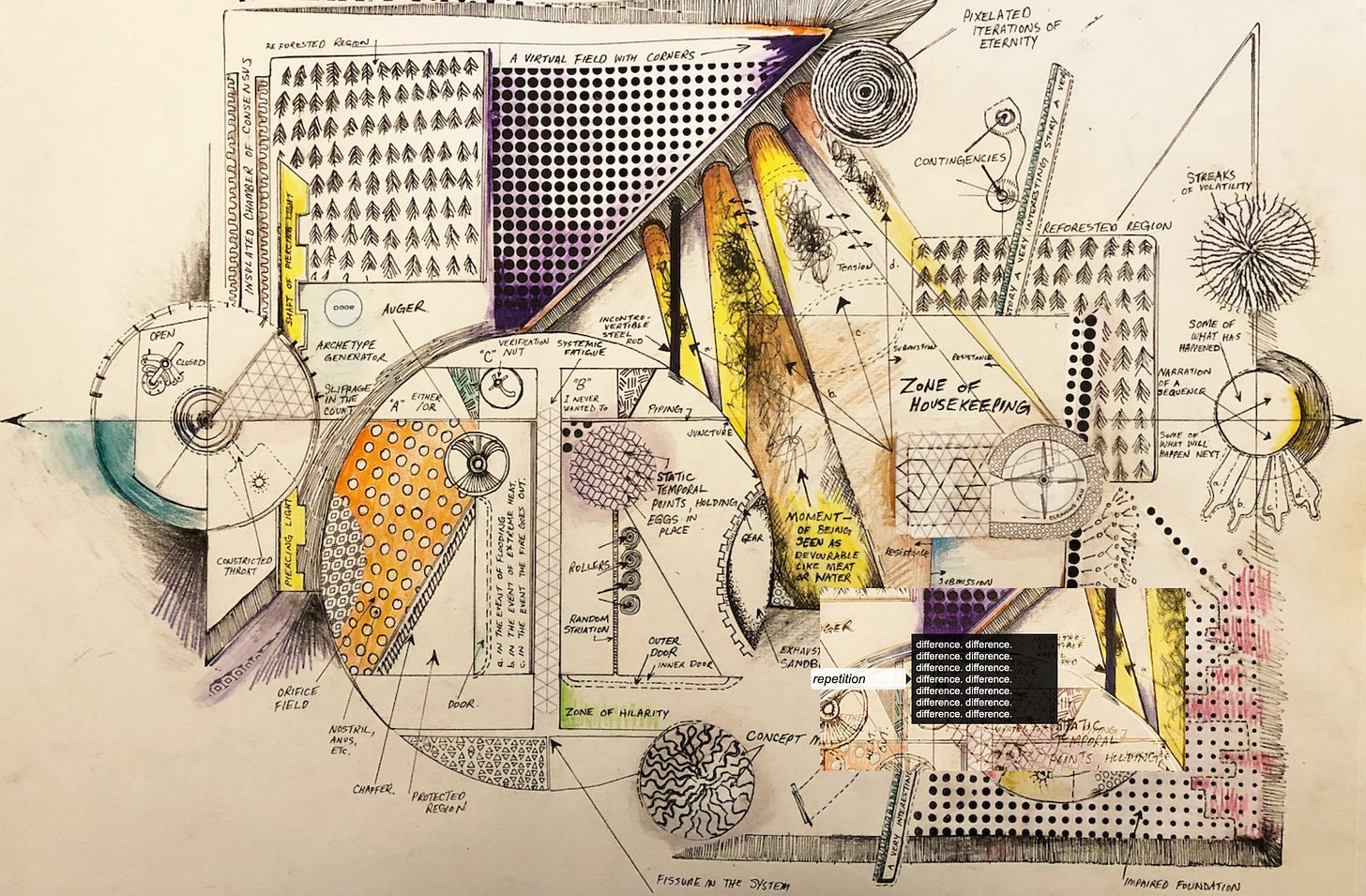

I do not have The Fix for AI fiction, but I do think I might be able to design a fix. At least, I’m excited to try. The experiment will be to create a “prompt harness” built on a theory of creativity — entangled, glitchy, opinionated, and deliberately token-consuming. (The opening diagram is the speculative build plan.)

A prompt harness is, as I understand it, a structured system for generating outputs, composed of all the stuff of the system: the corpus, the chained prompts, the agents, the constraints, the iterative passes. This is where the goal of resistance to substitution becomes a design principle. The model is the medium. The process becomes the site of authorship.

The goal will be to draft a system that forces friction back into the mechanism: to slow it down, to prevent premature resolution, to force returns and revisions, to create dependencies between sentences such that earlier choices matter and cannot be easily undone. The aim is productive instability—a sustained uncertainty that keeps the writing open and churning, longer than the model would like.
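To make the idea of friction a little more concrete, here is a minimal sketch of what such a harness could look like in Python. It is not the build itself, only an illustration under stated assumptions: the `Harness` class, its prompt wording, and the `stub_model` stand-in are all hypothetical, and any real model call would be passed in through the `generate` argument. Each sentence is drafted from a corpus passage, forced through a fixed number of objection-and-rewrite passes, and then locked, so that every later prompt has to carry the earlier choices with it.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical interface: any function that maps a prompt string to text.
Generate = Callable[[str], str]


@dataclass
class Harness:
    generate: Generate                 # stand-in for a real model call (assumption)
    corpus: List[str]                  # source passages the prompts draw on
    passes: int = 3                    # forced objection/rewrite rounds per sentence
    committed: List[str] = field(default_factory=list)  # locked sentences
    calls: int = 0                     # model calls spent so far

    def __post_init__(self) -> None:
        if not self.corpus:
            raise ValueError("corpus must contain at least one passage")

    def _ask(self, prompt: str) -> str:
        self.calls += 1
        return self.generate(prompt).strip()

    def next_sentence(self) -> str:
        # Earlier sentences are never rewritten; they only constrain what follows.
        context = " ".join(self.committed)
        source = self.corpus[len(self.committed) % len(self.corpus)]
        draft = self._ask(
            f"SOURCE: {source}\nSTORY SO FAR: {context}\nWrite the next sentence."
        )
        for _ in range(self.passes):
            # Friction: the draft must survive an objection before it can settle.
            objection = self._ask(
                f"STORY SO FAR: {context}\nCANDIDATE: {draft}\nState one objection."
            )
            draft = self._ask(
                f"STORY SO FAR: {context}\nCANDIDATE: {draft}\n"
                f"OBJECTION: {objection}\nRewrite the candidate."
            )
        self.committed.append(draft)
        return draft

    def write(self, n_sentences: int) -> str:
        for _ in range(n_sentences):
            self.next_sentence()
        return " ".join(self.committed)


def stub_model(prompt: str) -> str:
    # Deterministic placeholder so the sketch runs without any API access.
    return f"[{len(prompt)}]"


if __name__ == "__main__":
    harness = Harness(generate=stub_model, corpus=["passage one", "passage two"])
    print(harness.write(2))
    print("model calls spent:", harness.calls)
```

The cost is the point of the design: with `passes` set to 3, every committed sentence spends seven model calls instead of one, and nothing committed can be quietly swapped out later.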

My Machine

The corpus I will build for this contest is composed of open-source theory and research into AI. I am interested in the fictions that emerge in our sociotechnical imaginaries, along with the weird research we must do in order to understand the essential weirdness of AI. This creative agent will read the world and pull from it the strange and unusual, the suggestive and mystifying, participating in the hyperstitionary loop where fiction feeds the future that feeds the fiction.

A layer of the corpus will be my own writing, including this essay, on AI and sociotechnical imaginaries, so that the system operates within a voice and a set of commitments rather than an averaged-out discourse, or the opinions of averaged others.

Here, we do not want a democracy of ghosts, but a parliament of one—fractured, multiple, and not at all in agreement with itself.

This month I will build a speculative fictopoietic machine as an experiment. I will design and orchestrate a deeply un-optimal, idiosyncratic, labyrinthine passage through a corpus of my own design, toward an output that coheres but holds windows open—where characters, events, and the sentences themselves resist substitution. I will waste compute holding words together in different lights, and digitally compost whole paragraphs back into the fecundity of context. This will be a mapping of one creative process, a kind of informational dance.

And while the goal is not any single output, but the path there, I’m genuinely curious what the stories will be.

Congratulations on making the Un-Slop Fiction cut! This is an ambitious project in a short period of time.

This is all new to me, and mind stretching. Here are some random thoughts and questions in response...

If I understand correctly, branding in marketing can be a form of Hyperstition. If one says something publicly in a *potent* way and does so often enough then the concept can stick. (And there are tricks to this, per [The 11 immutable laws of Internet branding : Ries, Al](https://archive.org/details/11immutablelawso0000ries_b5c6)). I suppose Trump's strategy of "repeat it often enough and it becomes true" is a form of dark hyperstition.

> Pretty good, even. It is possible to resonate with synthetic emotion and pathos.

I wonder to what conception of emotion you adhere?

(I'm considering submitting to the 4S panel 87, "Affect and Artificial Intelligence," a piece that argues that AI cannot produce affect unless we agree on what affect is. In other words, the terms emotion and affect need to be qualified by the theory of affect and emotion we are using. This extends Daniel Dennett's paper "Why you can't make a computer that feels pain" (his answer: because concepts of pain are incoherent in the first place). But I digress.)

> What sounds like a whimsical idea has research to back it up. Among other interesting research, a recent (2026) paper, Alignment Pretraining: AI Discourse Causes Self-Fulfilling (Mis)alignment, demonstrates that narrative content can function as a causal training signal that shapes model behaviour.

I haven't read the recent paper, but it seems odd to me, given the enormous amount of information on the net, that anyone can have a measurable impact. I assume it's just for fairly esoteric content (?). I.e., to have an impact one would need very specific original content; otherwise it would be drowned out.

Personally, I don't have any problem with AI creating art: fiction, music, etc.

> Good writing is partly a practice of conjuring necessity out of possibility.

Nice!

> The experiment will be to create a “prompt harness” built on a theory of creativity — entangled, glitchy, opinionated, and deliberately token-consuming.

I'm curious about this. I don't know much about creativity. What theory of creativity are you working from? My external thesis examiner was Maggie Boden (died recently). She is the author of [The Creative Mind | Myths and Mechanisms](https://www.taylorfrancis.com/books/mono/10.4324/9780203508527/creative-mind-margaret-boden). It's from an AI perspective -- of the day. (She wrote reams of books about AI/cogsci. She told me once that when she wanted to learn about something she would write a book about it. That stuck with me.) Her criteria for something to be creative, if I correctly recall, were that it needed to be *surprising*, *important* and *novel.* Other criteria that come to mind are it being bizarre, maybe even crazy (https://rationallyspeakingpodcast.org/196-weird-ideas-and-opaque-minds-eric-schwitzgebel/), uncertain (cf. Angus Fletcher's Wonderworks [great book on fiction]), potent (Cognitive Productivity books) and funny (cf. Inside Jokes). So I guess a theory of creativity would need to account for the generation of information that has those attributes. Some of these attributes depend on the minds of others (e.g., potency and funniness) of course. So I guess a theory of creativity needs to include a theory of the minds of the audience.

I'm looking forward to your machine!

I’m looking forward to reading the outcome 🥰🤖